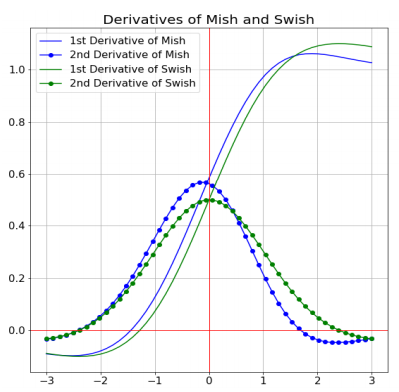

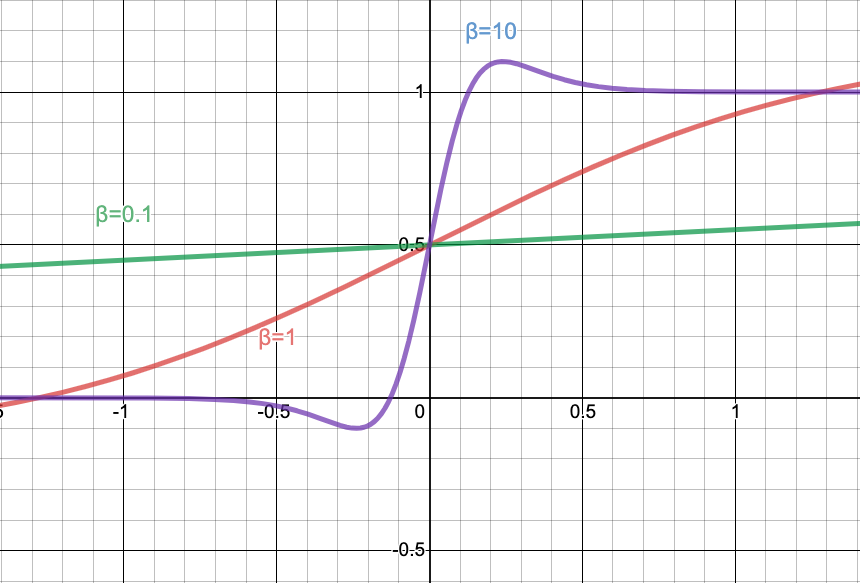

Posted by Andrew Howard and Suyog Gupta, Software Engineers, Google Research

On-device machine learning (ML) is an essential component in enabling privacy-preserving, always-available and responsive intelligence. This need to bring on-device machine learning to compute and power-limited devices has spurred the development of algorithmically-efficient neural network models and hardware capable of performing billions of math operations per second, while consuming only a few milliwatts of power. The recently launched Google Pixel 4 exemplifies this trend, and ships with the Pixel Neural Core that contains an instantiation of the Edge TPU architecture, Google’s machine learning accelerator for edge computing devices, and powers Pixel 4 experiences such as face unlock, a faster Google Assistant and unique camera features. Similarly, algorithms such as MobileNets have been critical for the success of on-device ML by providing compact and efficient neural network models for mobile vision applications.

Today we are pleased to announce the release of source code and checkpoints for MobileNetV3 and the Pixel 4 Edge TPU-optimized counterpart MobileNetEdgeTPU model. These models are the culmination of the latest advances in hardware-aware AutoML techniques as well as several advances in architecture design. On mobile CPUs, MobileNetV3 is twice as fast as MobileNetV2 with equivalent accuracy, and advances the state-of-the-art for mobile computer vision networks. On the Pixel 4 Edge TPU hardware accelerator, the MobileNetEdgeTPU model pushes the boundary further by improving model accuracy while simultaneously reducing the runtime and power consumption.

In contrast with the hand-designed previous version of MobileNet, MobileNetV3 relies on AutoML to find the best possible architecture in a search space friendly to mobile computer vision tasks. To most effectively exploit the search space we deploy two techniques in sequence: MnasNet and NetAdapt. First, we search for a coarse architecture using MnasNet, which uses reinforcement learning to select the optimal configuration from a discrete set of choices. Then we fine-tune the architecture using NetAdapt, a complementary technique that trims under-utilized activation channels in small decrements. To provide the best possible performance under different conditions we have produced both large and small models.

Comparison of accuracy vs. latency for mobile models on the ImageNet classification task using the Google Pixel 4 CPU.

The MobileNetV3 search space builds on multiple recent advances in architecture design that we adapt for the mobile environment. First, we introduce a new activation function called hard-swish (h-swish) which is based on the Swish nonlinearity function. The critical drawback of the Swish function is that it is very inefficient to compute on mobile hardware. So, instead we use an approximation that can be efficiently expressed as a product of two piecewise linear functions.

MobileNetV3 Object Detection and Semantic Segmentation

In addition to classification models, we also introduced MobileNetV3 object detection models, which reduced detection latency by 25% relative to MobileNetV2 at the same accuracy for the COCO dataset.

In order to optimize MobileNetV3 for efficient semantic segmentation, we introduced a low latency segmentation decoder called Lite Reduced Atrous Spatial Pyramid Pooling (LR-SPP). This new decoder contains three branches, one for low resolution semantic features, one for higher resolution details, and one for light-weight attention. The combination of LR-SPP and MobileNetV3 reduces the latency by over 35% on the high resolution Cityscapes Dataset.

The Edge TPU in Pixel 4 is similar in architecture to the Edge TPU in the Coral line of products, but customized to meet the requirements of key camera features in Pixel 4. The accelerator-aware AutoML approach substantially reduces the manual process involved in designing and optimizing neural networks for hardware accelerators. Crafting the neural architecture search space is an important part of this approach and centers around the inclusion of neural network operations that are known to improve hardware utilization.
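To make the hard-swish idea mentioned in this post concrete, here is a minimal sketch in plain Python. The post itself only says the approximation is a product of two piecewise linear functions; the specific form used below, x · ReLU6(x + 3) / 6, is taken from the MobileNetV3 paper rather than from this post, and the function names `relu6`, `swish` and `hard_swish` are illustrative choices, not names from the released code.

```python
import math


def relu6(x: float) -> float:
    """Clamp x to the range [0, 6]; a piecewise linear function."""
    return min(max(x, 0.0), 6.0)


def swish(x: float) -> float:
    """Swish: x * sigmoid(x). Needs an exponential, which is costly on mobile hardware."""
    return x / (1.0 + math.exp(-x))


def hard_swish(x: float) -> float:
    """Hard-swish (assumed form from the MobileNetV3 paper): x * ReLU6(x + 3) / 6.

    The sigmoid is replaced by the piecewise linear ReLU6(x + 3) / 6, so the
    result is a product of two piecewise linear functions (x and the gate).
    """
    return x * relu6(x + 3.0) / 6.0


if __name__ == "__main__":
    # Compare the two activations at a few sample points.
    for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
        print(f"x={x:+.1f}  swish={swish(x):+.4f}  h-swish={hard_swish(x):+.4f}")
```

The gate saturates at 0 for inputs at or below -3 and at 1 for inputs at or above 3, so outside that range hard-swish is exactly 0 or exactly x, and only comparisons, an addition and a multiplication are needed.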
